In one analysis of 200 funded AI startups, 73% turned out to be repackaging third-party APIs such as OpenAI's. Here's what separates the ones that survive, and how to build a defensible AI product that doesn't get Sherlocked.

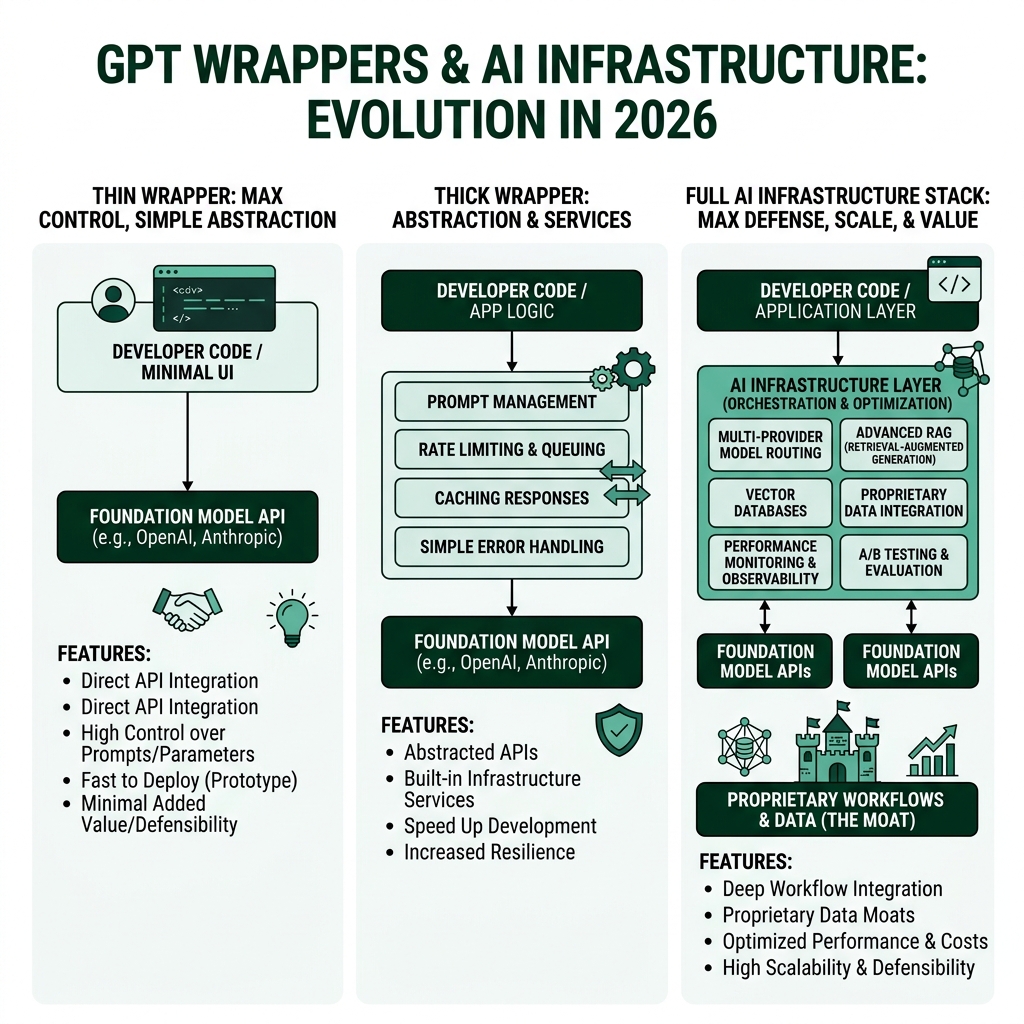

GPT Wrappers & AI Infrastructure refers to the software layers built on top of foundation models like OpenAI's GPT or Anthropic's Claude — ranging from simple API call handlers to complex, multi-provider orchestration systems that power modern AI products.

Here is a quick breakdown of what you need to know:

The gap between AI marketing and AI reality is striking. One developer, while debugging a late-night webhook integration, discovered that a company claiming to have proprietary deep learning infrastructure was quietly making calls to OpenAI's API every few seconds — despite raising $4.3 million in funding. That story is not an outlier. It is the norm.

Understanding how GPT wrappers actually work — and how to build one that lasts — is now one of the most important skills a startup founder can develop in 2026.

At Synergy Labs, we have spent years helping founders navigate exactly this challenge, building AI-powered mobile and web products that go beyond surface-level GPT wrappers to deliver real, defensible value. In the sections below, we break down everything you need to know, from architecture decisions to scaling strategies to avoiding the "wrapper trap."

In the current tech landscape, the term "GPT wrapper" is often used as a put-down. Critics frequently dismiss new AI startups as "fancy CRUD apps with a GPT wrapper," implying that they aren't doing any "real" innovation. But what does that actually mean?

At its simplest, a wrapper is a piece of software that "wraps" around an existing API (Application Programming Interface). Instead of building a massive neural network from scratch—which costs millions—developers use an API from a provider like OpenAI or Anthropic. They build a custom UI, add some specific instructions (prompts), and call it a product.
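To make the idea concrete, here is a minimal sketch of a "thin" wrapper in Python. Everything in it is illustrative: `SYSTEM_PROMPT`, `build_messages`, `ask`, and `fake_complete` are hypothetical names, and `fake_complete` stands in for whatever provider SDK call a real product would make.

```python
from typing import Callable

# Hypothetical stand-in for a provider SDK call: it receives a list of chat
# messages and returns the model's reply as text.
CompleteFn = Callable[[list[dict[str, str]]], str]

# The product's "specific instructions". This string is an invented example.
SYSTEM_PROMPT = "You are a contracts assistant. Answer in plain English."


def build_messages(user_input: str) -> list[dict[str, str]]:
    """Wrap the raw user input with the product's fixed instructions."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_input.strip()},
    ]


def ask(user_input: str, complete: CompleteFn) -> str:
    """The entire wrapper: add a prompt, forward the request, return the reply."""
    return complete(build_messages(user_input))


def fake_complete(messages: list[dict[str, str]]) -> str:
    """Offline stub so the sketch runs without network access or an API key."""
    return f"(model reply to: {messages[-1]['content']})"


if __name__ == "__main__":
    print(ask("  What is an indemnity clause?  ", fake_complete))
```

If this is all a product does, a user can reproduce it by pasting the same instructions into a chat window, which is the sense in which a wrapper is "thin."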

The reality is eye-opening. Recent investigative research into 200 funded AI startups revealed that 146 of them—roughly 73%—are just repackaging third-party APIs with a few extra steps. While their marketing might scream "proprietary deep learning," their network traffic tells a different story: a constant stream of data heading straight to OpenAI's servers.

However, being a wrapper isn't inherently bad. In fact, GPT wrappers can accelerate your AI product development significantly. They allow us to move from an idea to a working prototype in weeks rather than years. The problem arises when the wrapper is "thin"—meaning it adds so little value that a user could get the same result by just typing a good prompt into ChatGPT. To survive in 2026, a product must offer a unique value proposition that the base model cannot easily replicate.

Building a robust AI product requires more than just a "Send" button. The GPT Wrappers & AI Infrastructure stack has become increasingly sophisticated. We generally categorize these into two schools of thought:

Modern infrastructure also relies heavily on Retrieval-Augmented Generation (RAG). Before calling the model, your app searches a private "vector database" for the passages most relevant to the user's question and includes them in the prompt. This gives the AI context that isn't in its general training data, such as your company's private HR manuals or a user's personal history.
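The retrieval step can be sketched in a few lines of Python. This is a toy under stated assumptions: `embed` uses plain word counts where a real system would call an embedding model, and `TinyVectorStore` is a hypothetical in-memory stand-in for a real vector database.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real app would use an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._docs: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._docs.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> list[str]:
        """Return the k stored passages most similar to the query."""
        q = embed(query)
        ranked = sorted(self._docs, key=lambda doc: cosine(q, doc[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(question: str, store: TinyVectorStore, k: int = 1) -> str:
    """Retrieve private context first, then hand context plus question to the model."""
    context = "\n".join(store.search(question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    store = TinyVectorStore()
    store.add("Employees accrue 20 vacation days per year.")
    store.add("Expense reports are due by the fifth of each month.")
    print(build_prompt("How many vacation days do employees get?", store))
```

The model never sees the whole knowledge base, only the handful of passages that score highest for the current question.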

We've found that choosing the right moat for your GPT wrapper often comes down to how you handle the data between the user and the model. Because providers bill per token, tighter prompts that cut redundant instructions and context can save 30-40% on API costs while delivering higher-quality output. Some modern approaches even allow for "backend-less" deployment using scoped tokens: the user's browser holds a short-lived, narrowly scoped token instead of your real API key, and a thin proxy checks that token so you keep safety and billing controls without running a full application backend.
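One way to picture scoped tokens is as a signed, expiring claim that the proxy can verify without a database lookup. The sketch below uses only Python's standard library; the token layout, the `SECRET` value, the scope string, and the function names are all assumptions made for illustration, not any provider's actual scheme.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical signing key. A real deployment would load it from a secret manager.
SECRET = b"demo-secret-rotate-me"


def issue_token(user_id: str, scope: str, ttl_seconds: float,
                now: Optional[float] = None) -> str:
    """Create a short-lived token that only grants the given scope."""
    now = time.time() if now is None else now
    payload = json.dumps(
        {"sub": user_id, "scope": scope, "exp": now + ttl_seconds}, sort_keys=True
    ).encode()
    signature = hmac.new(SECRET, payload, hashlib.sha256).digest()
    return (base64.urlsafe_b64encode(payload).decode()
            + "." + base64.urlsafe_b64encode(signature).decode())


def verify_token(token: str, required_scope: str,
                 now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if the token is authentic, unexpired, and in scope."""
    now = time.time() if now is None else now
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:  # wrong number of parts or invalid base64
        return None
    expected = hmac.new(SECRET, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return None  # payload was tampered with or signed with another key
    claims = json.loads(payload)
    if claims["exp"] < now or claims["scope"] != required_scope:
        return None
    return claims


if __name__ == "__main__":
    token = issue_token("user-42", "chat:complete", ttl_seconds=60)
    print(verify_token(token, "chat:complete"))
```

The proxy would call `verify_token` on every request and attach the real provider key only after the check passes, so the key itself never reaches the browser.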

As your user base grows, the "wrapper" needs to become a powerhouse. Scaling AI products introduces challenges that traditional SaaS apps don't face—mainly high costs and high latency (the "waiting for the AI to think" time).

Strategic infrastructure can solve this. Intelligent caching is a game-changer: if a user asks a question that has been asked before, the system serves the saved answer instead of paying for a new AI generation. For workloads with many repeated questions, this can cut costs by as much as 70%. Furthermore, request tracing and load balancing let your app detect when one region is slow, so that if OpenAI's servers are slow in London, it can automatically route the request to a server in San Francisco or Doha.
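A minimal sketch of the caching idea follows, assuming exact-match caching on a normalized prompt. `ResponseCache` and `fake_generate` are hypothetical names, and production systems often add expiry or semantic (embedding-based) matching, which this sketch leaves out.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """Serve a stored answer when the same question was already asked."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self._generate = generate        # the expensive model call
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode()).hexdigest()

    def ask(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        answer = self._generate(prompt)
        self._store[key] = answer
        return answer

    @property
    def hit_rate(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def fake_generate(prompt: str) -> str:
    """Offline stub standing in for a paid model call."""
    return f"answer to: {prompt}"


if __name__ == "__main__":
    cache = ResponseCache(fake_generate)
    for question in ["What is RAG?", "what is  rag?", "What is a moat?", "What is RAG?"]:
        cache.ask(question)
    print(f"hits={cache.hits} misses={cache.misses} hit_rate={cache.hit_rate:.0%}")
```

Under the simplifying assumption that every model call costs the same, the fraction of spend saved equals the hit rate, so the size of the saving depends on how repetitive your traffic actually is.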

Managing rate limits is another operational hurdle. Many providers use "rolling windows" for API limits, meaning they count your requests over the most recent stretch of time rather than resetting the count at fixed intervals. If you don't have a proxy layer to queue and pace these calls, requests will start failing with rate-limit errors once traffic passes the limit. Horizontal scaling and smart routing are essential components of any GPT wrapper that has to stay up under load in 2026.
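A proxy layer can mirror a rolling window on its own side and hold back calls before the provider rejects them. Here is a minimal sketch; the class name and the limits used are illustrative and do not reflect any provider's real quotas.

```python
import time
from collections import deque
from typing import Callable


class SlidingWindowLimiter:
    """Allow at most max_calls within any window_seconds-long span."""

    def __init__(self, max_calls: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._max_calls = max_calls
        self._window = window_seconds
        self._clock = clock              # injectable so the sketch is testable
        self._calls: deque[float] = deque()

    def _prune(self, now: float) -> None:
        # Forget calls that have aged out of the rolling window.
        while self._calls and now - self._calls[0] >= self._window:
            self._calls.popleft()

    def try_acquire(self) -> bool:
        """Record a call and return True if it fits in the window, else False."""
        now = self._clock()
        self._prune(now)
        if len(self._calls) < self._max_calls:
            self._calls.append(now)
            return True
        return False

    def seconds_until_free(self) -> float:
        """How long a caller should wait before the next call can succeed."""
        now = self._clock()
        self._prune(now)
        if len(self._calls) < self._max_calls:
            return 0.0
        return self._window - (now - self._calls[0])


if __name__ == "__main__":
    limiter = SlidingWindowLimiter(max_calls=3, window_seconds=1.0)
    print([limiter.try_acquire() for _ in range(5)])
```

A caller that gets `False` can queue the request and sleep for `seconds_until_free()` instead of sending a call that is likely to be rejected.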

The "Wrapper Trap" is the moment a foundation model provider (like OpenAI) releases a new feature that makes your entire startup obsolete. In the tech world, we call this getting "Sherlocked." If your only value is "AI that writes legal briefs," and then Claude releases a "Legal Brief Mode," you’re in trouble.

To build a "thick" and defensible product, you need more than just a connection to an API. You need a moat. According to industry experts like Andrew Chen, the future of AI defensibility lies in returning to classic business moats:

By most accounts, state-of-the-art models stay only about six months ahead of open-source alternatives. This means your "secret sauce" shouldn't be the model itself, but how you apply it to a specific B2B niche or a complex human workflow.

If you've spent any time on Reddit or Hacker News lately, you've likely noticed a trend: yesterday's "GPT wrappers" are now calling themselves "agent platforms." Is this just better marketing, or has the tech actually changed?

There is a genuine debate in the startup community about this. A simple chatbot "talks" to you. An agent, however, "acts" for you. True agent platforms offer an orchestration layer that handles multi-step workflows. For example, an agent doesn't just write a script; it writes the script, generates the video, adds a voiceover, and posts it to social media.
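The difference between "talking" and "acting" shows up in code as an orchestration loop: each step reads shared state, does work, and passes its results on, with retries when a step fails. The sketch below is a deliberately small illustration; `Workflow`, `StepFailed`, and the four stub steps are hypothetical, and a real platform would replace the stubs with model calls and external APIs.

```python
from dataclasses import dataclass, field
from typing import Callable

# A step reads the shared state and returns the new keys it produced.
Step = Callable[[dict], dict]


class StepFailed(Exception):
    """Raised when a step still fails after all retries."""


@dataclass
class Workflow:
    steps: list[tuple[str, Step]] = field(default_factory=list)
    trace: list[str] = field(default_factory=list)

    def add(self, name: str, fn: Step) -> "Workflow":
        self.steps.append((name, fn))
        return self

    def run(self, state: dict, max_retries: int = 1) -> dict:
        for name, fn in self.steps:
            for attempt in range(1, max_retries + 2):
                try:
                    state = {**state, **fn(state)}   # merge the step's output
                    self.trace.append(f"{name}: ok (attempt {attempt})")
                    break
                except Exception as exc:
                    self.trace.append(f"{name}: error ({exc})")
            else:
                # The inner loop never hit "break": every attempt failed.
                raise StepFailed(name)
        return state


# Stub steps mirroring the example in the text; none of them call real services.
def write_script(state: dict) -> dict:
    return {"script": f"Script about {state['topic']}"}


def render_video(state: dict) -> dict:
    return {"video": f"video({state['script']})"}


def add_voiceover(state: dict) -> dict:
    return {"video": state["video"] + "+voice"}


def publish(state: dict) -> dict:
    return {"url": "https://example.com/posts/1"}  # placeholder URL


def build_pipeline() -> Workflow:
    return (Workflow()
            .add("write_script", write_script)
            .add("render_video", render_video)
            .add("add_voiceover", add_voiceover)
            .add("publish", publish))


if __name__ == "__main__":
    pipeline = build_pipeline()
    print(pipeline.run({"topic": "GPT wrappers"}))
    print(pipeline.trace)
```

The `trace` list is the seed of the observability that agent platforms need: when a multi-step run goes wrong, you have to know which step failed and on which attempt.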

This requires serious GPT Wrappers & AI Infrastructure. We're talking about:

While some rebrandings are definitely hype, the shift toward autonomous execution represents a real evolution in how we build with AI.

The market is consolidating. Simple, "thin" wrappers are dying out because they lack a moat. However, specialized wrappers that solve specific problems for businesses (like legal, medical, or engineering niches) are thriving. The winners in 2026 are those who focus on AI infusion services that combine traditional development skills (UI/UX, database management) with AI capabilities.

The best way to avoid being "Sherlocked" is to build deep workflow integration. If your AI is part of a complex business process with high switching costs, a general update from OpenAI won't replace you. You should also focus on building a community and a "data moat" where your specific use case generates data that makes your tool uniquely better every day.

Sometimes, yes. But the "real" ones offer genuine infrastructure value that a simple API call doesn't. This includes managing persistent memory, handling errors when the AI gets stuck, and providing "sandboxes" where the AI can safely interact with the real world. If the product still provides value even if you swap the underlying model (e.g., switching from GPT-4 to Claude 3.5), it’s likely more than just a wrapper.
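The "swap the model" test is easier to pass when the product talks to an interface instead of a specific vendor. The following sketch shows one way to structure that; `ModelProvider`, `StubProvider`, and `FallbackRouter` are hypothetical names, and the stubs stand in for real SDK clients.

```python
from typing import Protocol


class ProviderError(Exception):
    """Raised when a provider cannot serve a request."""


class ModelProvider(Protocol):
    """The only surface the rest of the product is allowed to depend on."""

    name: str

    def complete(self, prompt: str) -> str: ...


class StubProvider:
    """Offline stand-in for a real vendor client."""

    def __init__(self, name: str, healthy: bool = True) -> None:
        self.name = name
        self.healthy = healthy

    def complete(self, prompt: str) -> str:
        if not self.healthy:
            raise ProviderError(f"{self.name} unavailable")
        return f"[{self.name}] {prompt}"


class FallbackRouter:
    """Try providers in order and move on when one of them fails."""

    def __init__(self, providers: list[ModelProvider]) -> None:
        self._providers = providers

    def complete(self, prompt: str) -> str:
        errors = []
        for provider in self._providers:
            try:
                return provider.complete(prompt)
            except ProviderError as exc:
                errors.append(str(exc))
        raise ProviderError("all providers failed: " + "; ".join(errors))


if __name__ == "__main__":
    router = FallbackRouter([
        StubProvider("primary", healthy=False),
        StubProvider("backup"),
    ])
    print(router.complete("Summarize this contract."))
```

Because application code only ever calls `router.complete`, changing vendors or adding a backup is a configuration change, not a rewrite.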

Building a successful AI product in 2026 requires more than just a prompt and a prayer. It requires a partner who understands the deep nuances of GPT Wrappers & AI Infrastructure, from the kernel to the cloud.

At Synergy Labs, we don't just build wrappers; we build defensible, scalable AI ecosystems. As a top-tier mobile and web development agency with locations in tech hubs like Miami, Dubai, and San Francisco, we specialize in turning "fancy CRUD apps" into powerhouse platforms.

Why do founders choose us to lead their AI innovation?

Whether you are looking to build a specialized B2B tool or a complex autonomous agent platform, we have the expertise to ensure your vision survives the "wrapper trap."

Partner with Synergy Labs for AI Innovation and let's build something that lasts.
